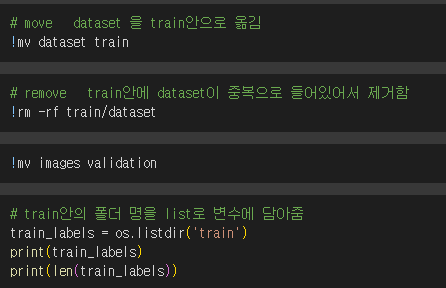

1. Pokémon classification

* Train: https://www.kaggle.com/thedagger/pokemon-generation-one
* Validation: https://www.kaggle.com/hlrhegemony/pokemon-image-dataset

import os

# Kaggle API credentials (replace with your own)
os.environ['KAGGLE_USERNAME'] = 'your Kaggle username'
os.environ['KAGGLE_KEY'] = 'your Kaggle API key'

# train data (Colab shell commands: the leading ! runs them in the shell)
!kaggle datasets download -d thedagger/pokemon-generation-one
!unzip -q /content/pokemon-generation-one.zip

# validation data
!kaggle datasets download -d hlrhegemony/pokemon-image-dataset
!unzip -q /content/pokemon-image-dataset.zip

import torch
import torch.nn as nn
import torch.optim as optim
import matplotlib.pyplot as plt
from torchvision import datasets, models, transforms
from torch.utils.data import DataLoader

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
print(device)

data_transforms = {
    'train': transforms.Compose([
        transforms.Resize((224, 224)),
        # augmentation: no rotation, random x-axis shear of up to 10 degrees either way, random zoom of 0.8x to 1.2x
        transforms.RandomAffine(0, shear=10, scale=(0.8, 1.2)),
        transforms.RandomHorizontalFlip(),
        transforms.ToTensor()
    ]),
    'validation': transforms.Compose([
        transforms.Resize((224, 224)),
        transforms.ToTensor()
    ])
}
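To make the augmentation concrete: with `degrees=0` there is no rotation, `shear=10` draws a shear angle parallel to the x-axis uniformly from (-10, 10) degrees, and `scale=(0.8, 1.2)` draws a zoom factor from that range, independently for every image. The pure-Python sketch below samples such parameters and applies a textbook shear-plus-scale matrix to a point relative to the image center. It is an illustration under that assumption, not torchvision's internal code; torchvision's exact matrix composition and sign conventions may differ. `sample_affine_params`, `scale_shear_matrix`, and `apply` are hypothetical helpers.

```python
import math
import random

def sample_affine_params(shear_deg=10.0, scale_range=(0.8, 1.2), rng=random):
    """Sample (shear angle in degrees, scale) as the RandomAffine arguments describe."""
    shear = rng.uniform(-shear_deg, shear_deg)   # x-axis shear angle
    scale = rng.uniform(*scale_range)            # zoom factor
    return shear, scale

def scale_shear_matrix(shear_deg, scale):
    """Textbook 2x2 matrix: shear parallel to the x-axis combined with uniform scaling (illustrative)."""
    t = math.tan(math.radians(shear_deg))
    return [[scale, scale * t],
            [0.0, scale]]

def apply(matrix, point):
    """Multiply a 2x2 matrix by a 2D point."""
    x, y = point
    return (matrix[0][0] * x + matrix[0][1] * y,
            matrix[1][0] * x + matrix[1][1] * y)

rng = random.Random(0)
shear, scale = sample_affine_params(rng=rng)
print(f"shear={shear:.2f} deg, scale={scale:.3f}")
# y is only scaled; x is shifted in proportion to y (the shear) and then scaled
print(apply(scale_shear_matrix(shear, scale), (10.0, 20.0)))
```

Because the scale is uniform, applying the shear before or after it gives the same matrix.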

# ImageFolder: each subfolder of 'train' / 'validation' is one class
image_datasets = {
    'train': datasets.ImageFolder('train', data_transforms['train']),
    'validation': datasets.ImageFolder('validation', data_transforms['validation'])
}
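`ImageFolder` treats each subfolder as one class and assigns label indices in sorted order of the folder names, so train and validation labels only line up if both directories contain exactly the same set of class folders. The sketch below mimics that mapping with the standard library and checks whether two directories agree; `find_class_index`, `check_same_classes`, and the temporary folder names are hypothetical, for illustration only.

```python
import os
import tempfile

def find_class_index(root):
    """Mimic ImageFolder's mapping: sorted subfolder names -> 0, 1, 2, ..."""
    classes = sorted(entry.name for entry in os.scandir(root) if entry.is_dir())
    return {name: i for i, name in enumerate(classes)}

def check_same_classes(train_root, val_root):
    """Return (classes only in train, classes only in validation)."""
    train = set(find_class_index(train_root))
    val = set(find_class_index(val_root))
    return sorted(train - val), sorted(val - train)

with tempfile.TemporaryDirectory() as tmp:
    for split, names in [("train", ["Pikachu", "Bulbasaur", "Snorlax"]),
                         ("validation", ["Snorlax", "Pikachu", "Bulbasaur", "Mew"])]:
        for name in names:
            os.makedirs(os.path.join(tmp, split, name))
    train_idx = find_class_index(os.path.join(tmp, "train"))
    val_idx = find_class_index(os.path.join(tmp, "validation"))
    only_train, only_val = check_same_classes(os.path.join(tmp, "train"),
                                              os.path.join(tmp, "validation"))
    print(train_idx)   # {'Bulbasaur': 0, 'Pikachu': 1, 'Snorlax': 2}
    print(val_idx)     # the extra 'Mew' folder shifts Pikachu and Snorlax to other indices
    print(only_train, only_val)   # [] ['Mew']
```

An extra or missing folder in either split silently shifts every later index, so removing validation classes that do not exist in the training set is worth doing before training.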

dataloaders = {
    'train': DataLoader(
        image_datasets['train'],
        batch_size=32,
        shuffle=True
    ),
    'validation': DataLoader(
        image_datasets['validation'],
        batch_size=32,
        shuffle=False
    )
}
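With `batch_size=32`, each epoch yields ceil(N / 32) batches, and with the default `drop_last=False` the last batch is smaller whenever N is not a multiple of 32. `shuffle=True` draws a new random order every epoch, while the validation loader keeps the dataset order. The pure-Python sketch below imitates that behavior on index lists; `batch_indices` is a hypothetical helper, not `DataLoader`'s implementation.

```python
import math
import random

def batch_indices(n, batch_size, shuffle, rng=None):
    """Split indices 0..n-1 into batches, optionally in random order (keeps the short last batch)."""
    order = list(range(n))
    if shuffle:
        (rng or random).shuffle(order)
    return [order[i:i + batch_size] for i in range(0, n, batch_size)]

batches = batch_indices(100, 32, shuffle=True, rng=random.Random(42))
print(len(batches), [len(b) for b in batches])   # 4 [32, 32, 32, 4]
print(math.ceil(100 / 32))                        # 4
```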

# Show the first training batch (32 images) with their label indices
imgs, labels = next(iter(dataloaders['train']))
fig, axes = plt.subplots(4, 8, figsize=(16, 8))
for ax, img, label in zip(axes.flatten(), imgs, labels):
    ax.imshow(img.permute(1, 2, 0))  # C x H x W -> H x W x C for matplotlib
    ax.set_title(label.item())
    ax.axis('off')
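`ToTensor()` produces tensors in channels-first layout (C x H x W) with pixel values scaled from 0 to 255 down to 0.0 to 1.0, whereas `plt.imshow` expects channels-last (H x W x C). `img.permute(1, 2, 0)` moves the channel axis to the end. The nested-list sketch below performs the same reindexing without PyTorch; `chw_to_hwc` is a hypothetical helper.

```python
def chw_to_hwc(tensor):
    """Reorder a nested list from [C][H][W] to [H][W][C], like tensor.permute(1, 2, 0)."""
    channels, height, width = len(tensor), len(tensor[0]), len(tensor[0][0])
    return [[[tensor[c][h][w] for c in range(channels)]
             for w in range(width)]
            for h in range(height)]

# A 3-channel, 2x2 "image": every pixel has (R, G, B) = (1.0, 0.5, 0.0)
chw = [[[1.0, 1.0], [1.0, 1.0]],   # R plane
       [[0.5, 0.5], [0.5, 0.5]],   # G plane
       [[0.0, 0.0], [0.0, 0.0]]]   # B plane
hwc = chw_to_hwc(chw)
print(hwc[0][0])  # [1.0, 0.5, 0.0]: one pixel's RGB values, as imshow expects
```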

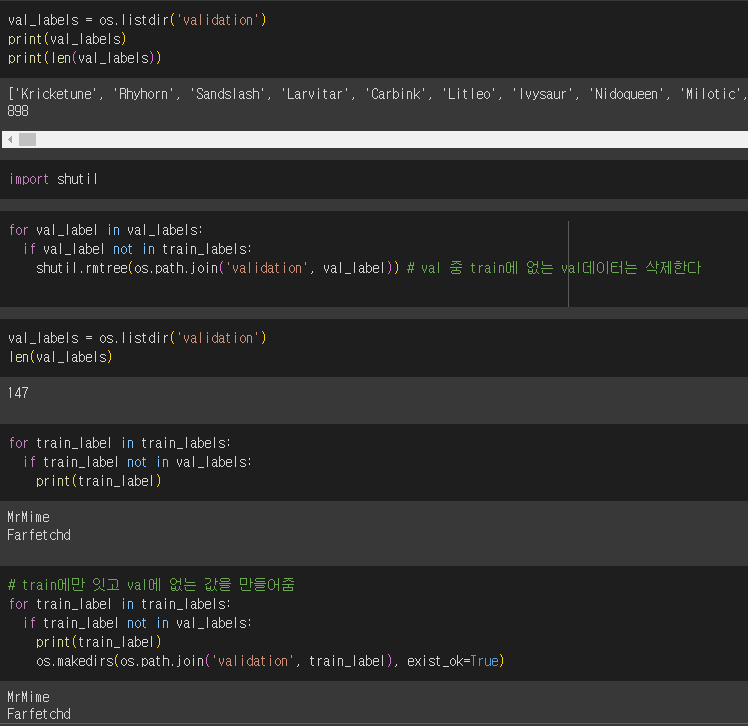

2. EfficientNet

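* A convolutional neural network developed by a Google research team that achieves high accuracy on computer vision tasks such as image classification and object detection

* Its defining feature is compound scaling: network depth, width, and input resolution are scaled up together, which maximizes accuracy for a given amount of computation

* EfficientNet-B4 is a mid-sized model in the EfficientNet series (B0 through B7)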
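In the EfficientNet paper, compound scaling uses one coefficient φ: depth grows as α^φ, width as β^φ, and input resolution as γ^φ, with base constants α = 1.2, β = 1.1, γ = 1.15 chosen so that α·β²·γ² ≈ 2. Since the FLOPs of a convolutional network scale roughly with depth × width² × resolution², each +1 in φ about doubles the compute. The sketch below only evaluates those formulas. The released models use rounded, hand-picked multipliers (torchvision's B4, for example, uses a width multiplier of 1.4, a depth multiplier of 1.8, and a default input size of 380), so the numbers will not match any specific model exactly; `compound_scale` is a hypothetical helper.

```python
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15   # base constants reported in the EfficientNet paper

def compound_scale(phi, base_resolution=224):
    """Depth multiplier, width multiplier, input resolution, and FLOPs factor for coefficient phi."""
    depth = ALPHA ** phi
    width = BETA ** phi
    resolution = round(base_resolution * GAMMA ** phi)
    flops_factor = (ALPHA * BETA ** 2 * GAMMA ** 2) ** phi   # FLOPs ~ d * w^2 * r^2
    return depth, width, resolution, flops_factor

print(f"alpha*beta^2*gamma^2 = {ALPHA * BETA ** 2 * GAMMA ** 2:.3f}")   # about 1.92, close to 2
for phi in range(4):
    d, w, r, f = compound_scale(phi)
    print(f"phi={phi}: depth x{d:.2f}, width x{w:.2f}, resolution {r}px, FLOPs x{f:.2f}")
```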

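from torchvision.models import efficientnet_b4, EfficientNet_B4_Weights
from torchvision.models._api import WeightsEnum
from torch.hub import load_state_dict_from_url

# Workaround for the hash-mismatch error some torchvision versions raise when downloading
# these weights: patch WeightsEnum.get_state_dict so the checksum is not verified
def get_state_dict(self, *args, **kwargs):
    kwargs.pop("check_hash", None)  # the default avoids a KeyError if the argument is absent
    return load_state_dict_from_url(self.url, *args, **kwargs)

WeightsEnum.get_state_dict = get_state_dict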
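For context on what this patch disables: according to the `torch.hub.load_state_dict_from_url` documentation, with `check_hash=True` the file name in the URL must follow the convention `filename-<sha256>.ext`, where `<sha256>` is the first eight or more hex digits of the SHA-256 hash of the file, and the downloaded contents are verified against it. The standard-library sketch below reproduces that kind of comparison; `verify_hash_prefix` and the example file name are hypothetical. Skipping the check removes the error, but a corrupted or altered download would then go unnoticed.

```python
import hashlib
import re

HASH_IN_NAME = re.compile(r"-([a-f0-9]*)\.")   # "weights-1a2b3c4d.pth" -> "1a2b3c4d"

def verify_hash_prefix(filename, content):
    """True if the SHA-256 of content starts with the hex prefix embedded in filename."""
    match = HASH_IN_NAME.search(filename)
    if match is None:
        raise ValueError(f"no hash prefix in {filename!r}")
    prefix = match.group(1)
    digest = hashlib.sha256(content).hexdigest()
    return digest[:len(prefix)] == prefix

data = b"pretend these are model weights"
good_name = f"weights-{hashlib.sha256(data).hexdigest()[:8]}.pth"
print(good_name, verify_hash_prefix(good_name, data))           # True
print(verify_hash_prefix(good_name, data + b" (corrupted)"))    # False
```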

# EfficientNet-B4 with ImageNet-pretrained weights
model = efficientnet_b4(weights=EfficientNet_B4_Weights.IMAGENET1K_V1).to(device)
model

# Freeze all pretrained parameters so training does not update them
for param in model.parameters():
    param.requires_grad = False

# Replace the model's final classifier (a Sequential block) with a new head for 149 classes
model.classifier = nn.Sequential(
    nn.Linear(1792, 512),
    nn.ReLU(),
    nn.Linear(512, 149)
).to(device)
print(model)
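The head's sizes follow from the backbone and the data: torchvision's EfficientNet-B4 feature extractor ends with 1792 channels (4 × 448, where 448 is the last stage's 320 channels scaled by the width multiplier 1.4), and the output size 149 matches the number of Pokémon classes used here. Because every backbone parameter was frozen, only the two `nn.Linear` layers are trainable. The arithmetic below counts their weights and biases; in the notebook, `sum(p.numel() for p in model.parameters() if p.requires_grad)` should report the same total. `linear_params` is a hypothetical helper.

```python
def linear_params(in_features, out_features, bias=True):
    """Number of trainable values in nn.Linear(in_features, out_features)."""
    return in_features * out_features + (out_features if bias else 0)

backbone_channels = 4 * round(320 * 1.4)   # 320 channels x width 1.4 = 448, expanded 4x -> 1792
hidden, num_classes = 512, 149

head = [linear_params(backbone_channels, hidden), linear_params(hidden, num_classes)]
print(backbone_channels)   # 1792
print(head, sum(head))     # [918016, 76437] 994453
```

The ReLU between the two layers has no parameters, so it does not appear in the count.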

# Training is commented out because of GPU limitations; the trained model file was downloaded instead
# optimizer = optim.Adam(model.classifier.parameters(), lr=0.001)  # only the new head is trained
# criterion = nn.CrossEntropyLoss()
# epochs = 10
#
# for epoch in range(epochs):
#     for phase in ['train', 'validation']:
#         if phase == 'train':
#             model.train()
#         else:
#             model.eval()
#         sum_losses = 0
#         sum_accs = 0
#         for x_batch, y_batch in dataloaders[phase]:
#             x_batch = x_batch.to(device)
#             y_batch = y_batch.to(device)
#             # track gradients only in the training phase
#             with torch.set_grad_enabled(phase == 'train'):
#                 y_pred = model(x_batch)
#                 loss = criterion(y_pred, y_batch)
#             if phase == 'train':
#                 optimizer.zero_grad()
#                 loss.backward()
#                 optimizer.step()
#             sum_losses = sum_losses + loss.item()  # .item() avoids keeping the graph alive
#             y_pred_index = torch.argmax(y_pred, dim=1)  # softmax would not change the argmax
#             acc = (y_batch == y_pred_index).float().sum().item() / len(y_batch) * 100
#             sum_accs = sum_accs + acc
#         avg_loss = sum_losses / len(dataloaders[phase])
#         avg_acc = sum_accs / len(dataloaders[phase])
#         print(f'{phase:10s}: Epoch {epoch+1:4d}/{epochs} Loss: {avg_loss:.4f} Accuracy: {avg_acc:.2f}%')
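The loop above averages per-batch accuracies, so the smaller final batch counts as much as a full one. Since softmax is monotonic, taking the argmax of the raw logits gives the same predictions as taking it after softmax. The pure-Python sketch below uses made-up logits (not results from this model) to compare the per-batch mean with the exact, sample-weighted accuracy; `argmax` and `batch_correct` are hypothetical helpers.

```python
def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=values.__getitem__)

def batch_correct(batch_logits, labels):
    """Number of correct predictions in one batch."""
    return sum(argmax(row) == y for row, y in zip(batch_logits, labels))

# Two toy batches of 3-class logits: a full batch of 4 and a final batch of 1
batches = [
    ([[2.0, 0.1, -1.0], [0.0, 3.0, 0.5], [1.0, 0.2, 0.1], [0.3, 0.2, 2.5]], [0, 1, 2, 2]),
    ([[0.1, 0.9, 0.0]], [0]),
]
per_batch = [100 * batch_correct(x, y) / len(y) for x, y in batches]
mean_of_batches = sum(per_batch) / len(per_batch)
weighted = 100 * sum(batch_correct(x, y) for x, y in batches) / sum(len(y) for _, y in batches)
print(per_batch, mean_of_batches, weighted)   # [75.0, 0.0] 37.5 60.0
```

With 32-image batches and a large dataset the gap is usually small, but summing correct predictions and dividing by the dataset size gives the exact figure.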

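# Save the learned parameters (state_dict) to a file
# (run this only after training; otherwise it overwrites model.pth with an untrained head)
torch.save(model.state_dict(), 'model.pth')

# .pth is short for PyTorch and is only a naming convention (.pt is also common). Keras/TensorFlow
# models are often saved as .h5 (HDF5), but torch.save writes PyTorch's own format whatever the
# extension, so naming the file model.h5 would not make it readable by TensorFlow.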

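# Rebuild the same architecture without pretrained weights (untrained), then replace the
# classifier so its layer shapes match the saved state_dict (only the output size is changed)
model = models.efficientnet_b4().to(device)
model.classifier = nn.Sequential(
    nn.Linear(1792, 512),
    nn.ReLU(),
    nn.Linear(512, 149)
).to(device)
print(model)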

# Load the learned parameters from model.pth into the model
model.load_state_dict(torch.load('model.pth', map_location=device))
model.eval()  # inference mode (e.g. BatchNorm uses its running statistics)
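`load_state_dict` matches tensors by parameter name (and shape), and with the default `strict=True` it raises an error if any key is missing or unexpected. That is why the untrained `efficientnet_b4()` must receive exactly the same `classifier` layout before loading. The dict-based sketch below imitates only the name check. `compare_keys` is a hypothetical helper, and the shortened key lists are illustrative, modeled on how `nn.Sequential` numbers its layers: the new head stores parameters at `classifier.0` and `classifier.2` (the `ReLU` at index 1 has none), while torchvision's default head, `Dropout` then `Linear`, stores them at `classifier.1`.

```python
def compare_keys(model_keys, saved_keys):
    """Return (missing, unexpected) keys, as a strict state_dict load would report them."""
    missing = sorted(set(model_keys) - set(saved_keys))
    unexpected = sorted(set(saved_keys) - set(model_keys))
    return missing, unexpected

saved = ["features.0.0.weight", "classifier.0.weight", "classifier.0.bias",
         "classifier.2.weight", "classifier.2.bias"]
# Default head Sequential(Dropout, Linear): parameters live at index 1
default_head = ["features.0.0.weight", "classifier.1.weight", "classifier.1.bias"]
# Rebuilt head Sequential(Linear, ReLU, Linear), the same as during training
rebuilt_head = list(saved)

print(compare_keys(default_head, saved))   # classifier.1.* missing, classifier.0/2.* unexpected
print(compare_keys(rebuilt_head, saved))   # ([], []): strict loading succeeds
```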

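from PIL import Image

# .convert('RGB') guards against grayscale or RGBA files (ImageFolder's default loader does the same)
img1 = Image.open('validation/Snorlax/4.jpg').convert('RGB')
img2 = Image.open('validation/Diglett/0.jpg').convert('RGB')

fig, axes = plt.subplots(1, 2, figsize=(12, 6))
axes[0].imshow(img1)
axes[0].axis('off')
axes[1].imshow(img2)
axes[1].axis('off')
plt.show()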

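# Apply the validation transforms: each RGB image becomes a 3 x 224 x 224 tensor
img1_input = data_transforms['validation'](img1)
img2_input = data_transforms['validation'](img2)
print(img1_input.shape)
print(img2_input.shape)

# Stack the two images into one batch of shape 2 x 3 x 224 x 224
test_batch = torch.stack([img1_input, img2_input])
test_batch = test_batch.to(device)
test_batch.shape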

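# No gradients are needed for inference
with torch.no_grad():
    y_pred = model(test_batch)
y_pred

# Softmax over the class dimension turns the logits into probabilities
y_prob = nn.Softmax(1)(y_pred)
y_prob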

# topk returns the k largest values and their indices; max would return only the single largest
probs, idx = torch.topk(y_prob, k=3)
print(probs)
print(idx)
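`nn.Softmax(1)` turns each row of logits into probabilities that sum to 1, and `torch.topk(y_prob, k=3)` returns, per row, the three largest probabilities and their column indices, sorted in descending order by default; those indices are what `image_datasets['validation'].classes[...]` maps to names. A pure-Python sketch of the same two steps for a single row, with made-up logits and illustrative class names; `softmax` and `topk` here are hypothetical helpers, not the PyTorch functions.

```python
import heapq
import math

def softmax(logits):
    """Convert one row of logits to probabilities (max subtracted for numerical stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def topk(values, k):
    """Return (top values, their indices) in descending order, like torch.topk on one row."""
    idx = heapq.nlargest(k, range(len(values)), key=values.__getitem__)
    return [values[i] for i in idx], idx

classes = ["Bulbasaur", "Diglett", "Pikachu", "Snorlax"]   # illustrative names only
probs, idx = topk(softmax([0.2, 1.0, -0.5, 3.0]), k=3)
# Snorlax first, then Diglett, then Bulbasaur
print(", ".join(f"{p * 100:.2f}% {classes[i]}" for p, i in zip(probs, idx)))
```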

fig, axes = plt.subplots(1, 2, figsize=(15, 6))

axes[0].set_title('{:.2f}% {}, {:.2f}% {}, {:.2f}% {}'.format(
    probs[0, 0] * 100,
    image_datasets['validation'].classes[idx[0, 0]],
    probs[0, 1] * 100,
    image_datasets['validation'].classes[idx[0, 1]],
    probs[0, 2] * 100,
    image_datasets['validation'].classes[idx[0, 2]],
))
axes[0].imshow(img1)
axes[0].axis('off')

axes[1].set_title('{:.2f}% {}, {:.2f}% {}, {:.2f}% {}'.format(
    probs[1, 0] * 100,
    image_datasets['validation'].classes[idx[1, 0]],
    probs[1, 1] * 100,
    image_datasets['validation'].classes[idx[1, 1]],
    probs[1, 2] * 100,
    image_datasets['validation'].classes[idx[1, 2]],
))
axes[1].imshow(img2)
axes[1].axis('off')
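The two `set_title` calls differ only in the row index. A small helper could build the same `'xx.xx% Name, xx.xx% Name, xx.xx% Name'` string for any row, letting the plotting code loop over `axes` instead of repeating the block. The sketch below is hypothetical (`topk_title` is not from the original) and runs on plain Python lists with illustrative values; the commented lines show how it might be used in the notebook.

```python
def topk_title(row_probs, row_idx, class_names):
    """Format one row of top-k results as 'xx.xx% Name, xx.xx% Name, ...'."""
    return ", ".join(f"{p * 100:.2f}% {class_names[i]}" for p, i in zip(row_probs, row_idx))

class_names = ["Bulbasaur", "Diglett", "Snorlax"]   # illustrative
probs = [[0.9, 0.06, 0.04], [0.7, 0.2, 0.1]]
idx = [[2, 0, 1], [1, 2, 0]]
for row in range(len(probs)):
    print(topk_title(probs[row], idx[row], class_names))

# In the notebook (sketch):
# for i, (ax, img) in enumerate(zip(axes, [img1, img2])):
#     ax.set_title(topk_title(probs[i].tolist(), idx[i].tolist(), image_datasets['validation'].classes))
#     ax.imshow(img)
#     ax.axis('off')
```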
